![Black screen with white font subtitle at the bottom [sound of subtitles]](https://vitalcapacities.com/wp-content/uploads/2021/07/Screenshot-2021-07-11-at-18.26.07-1024x283.png)

![Black screen with white font subtitle at the bottom [music and sound fades]](https://vitalcapacities.com/wp-content/uploads/2021/07/Screenshot-2021-07-11-at-18.25.53-1024x278.png)

These two images are the beginning and the end of the video work titled [sound of subtitles].

![A block of text in bold black font on a white background. It reads: [soaring orchestra music] [sound of remembering fondly] [♪♪♪] [sound of shifting relationship] [mysterious string music] [sound of emptiness in the room] [dramatic theme swelling] [sound of communicating gently] [***] [sound of world shattering] [somber music] [sound of shaping context] [music gets faster] [sound of still silence] [music intensifies] [sound of whispering sweet nothings] [music and sound fades]. The musical note subtitle [♪♪♪] is in dark blue.](https://vitalcapacities.com/wp-content/uploads/2021/06/postcardimage-1024x576.jpg)

![Screen capture of videos with white subtitles on a black background. Three identical video stills are placed side by side. They are black and white images of clay-covered hands shaping round pottery on a wheel. The person is wearing a long-sleeved shirt rolled up at the lower arms. The images have different subtitles: the left image reads [turning], the middle image reads [sound of remembering fondly], and the right image reads [soaring orchestra music].](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-29-at-17.42.29-1024x275.png)

![Screen capture of videos with white subtitles on a black background. Three identical video stills are placed side by side. They are colour images of clay-covered hands shaping pottery on a wheel. The images have different subtitles: the left video reads [holding], the middle video reads [sound of listening inward], and the right video reads [♪♪♪].](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-29-at-17.42.40-1024x277.png)

![Screen capture of videos with white subtitles on a black background. Three identical video stills are placed side by side. They are colour images of clay-covered hands shaping brown pottery on a wheel. The images have different subtitles: the left reads [shaping], the middle video reads [sound of emptiness in the room], and the right reads [mysterious string music].](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-29-at-17.42.51-1024x277.png)

[turning] [sound of remembering fondly] [soaring orchestra music] [holding] [sound of listening inward] [♪♪♪] [shaping] [sound of emptiness in the room] [mysterious string music]

The audio will be silent in the piece I make as the final outcome of this residency: I want the subtitles to suggest endless possibilities for what the words might sound like to viewers.

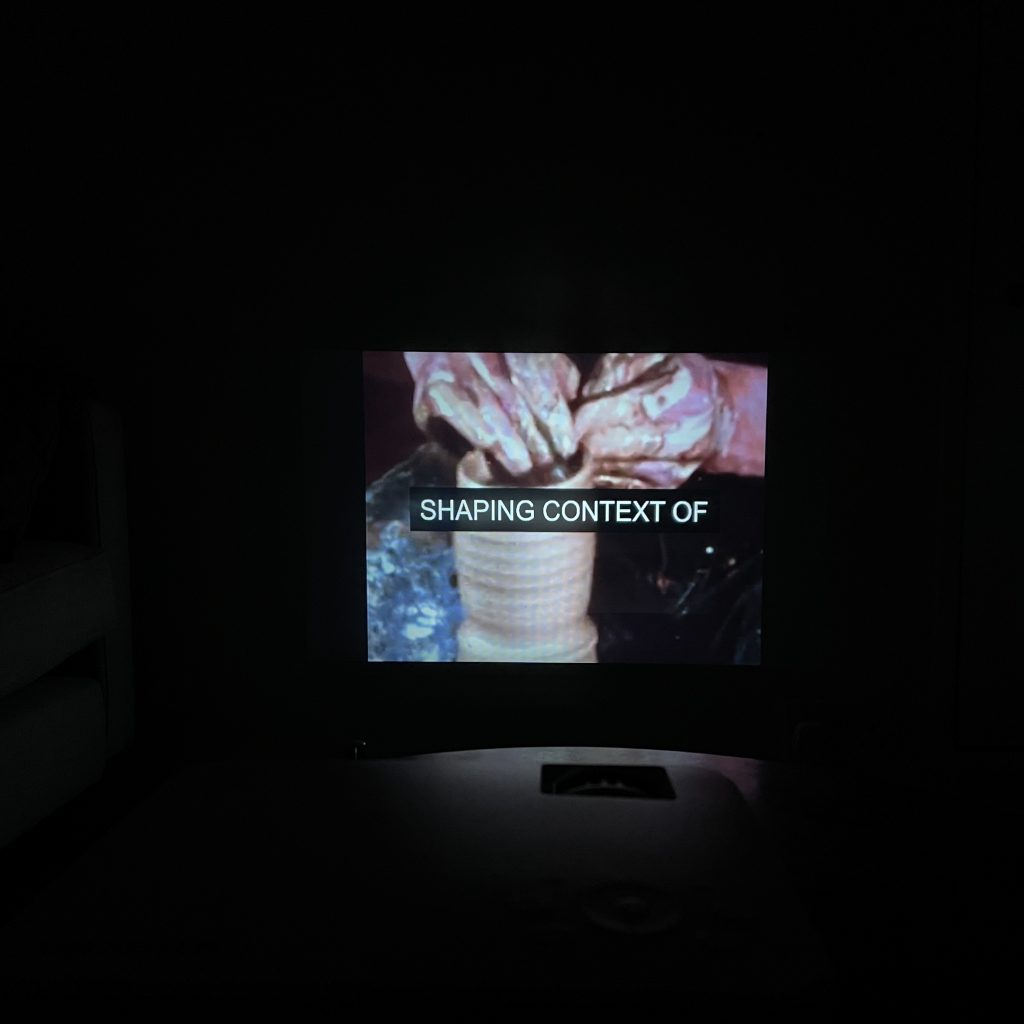

I have been experimenting with multiple videos using Premiere Pro and After Effects. Figuring out the dimensions and creating the subtitles has been a daunting task! This is a snippet of what I’ve been working on today in my impromptu ‘studio’: a quickly emptied-out space against a small living room wall, where I pushed the sofa aside to make enough room for the video projection. Three videos are playing at once, all showing the same footage of hands throwing on the wheel and shaping round pottery. Each screen has different subtitles: on the left, an ‘action’-based subtitle ([turning] & [creating shape]); in the middle, an abstract subtitle ([sound of shifting relationship] & [sound of remembering]); and on the right, a ‘music’-based subtitle ([Dramatic theme playing] & [♪♪♪]). The footage originally comes from the Craft / Potting / Bookbinding Practice Film from Bexley Local Studies & Archive Centre, held in London’s Screen Archives. I am heading in a direction where I would like to experiment with the same video but different subtitles to ‘alter’ the experience for the viewer, changing the perception of the event through the poetic language of the subtitle.

![Screen capture of 3 videos playing side by side; each video shows a pair of hands shaping brown clay pottery on a wheel. Each video has white subtitles on a black background: the video on the left reads [throwing], the middle video reads [sound of emptiness in the room], and the video on the right reads [dramatic theme playing]. All three videos are set against a black background.](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-28-at-17.08.28-1024x317.png)

![Screen capture of 3 videos playing side by side; each video shows a pair of hands shaping brown clay pottery on the wheel. Each video has white subtitles on a black background: the subtitle on the left reads [gently holding], the middle subtitle reads [sound of listening inward], and the subtitle on the right reads [soaring orchestra music]. All three videos are set against a black background.](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-28-at-17.08.36-1024x318.png)

This week, I am planning to experiment with several different videos and show them simultaneously at a more playful pace. Please leave a reply to this post if you have any comments or questions! 🙂

I would like to share some subtitles I have collected in my notes on my phone.

Music-related: [Music and sound fades] [Soaring orchestra music] [Music intensifies] [Mysterious string music] [Somber Music] [Dramatic theme swelling] [Dramatic string music builds] [Music gets faster] [♪♪♪] [***]

Action-related: [humming] [screaming] [breathing] [footsteps approaching] [crying] [wailing] [chattering] [sighing] [sobbing] [yawning]

How can these ‘objective’ subtitles be poetic in their own way? Below are some I’ve written.

[Sound of shaping context] [Sound of shifting relationship] [Sound of still silence] [Sound of emptiness in the room] [Sound of seeing] [Sound of communicating gently] [Sound of listening inward] [Sound of whispering sweet nothings] [Sound of worlds shattering] [Sound of remembering fondly]

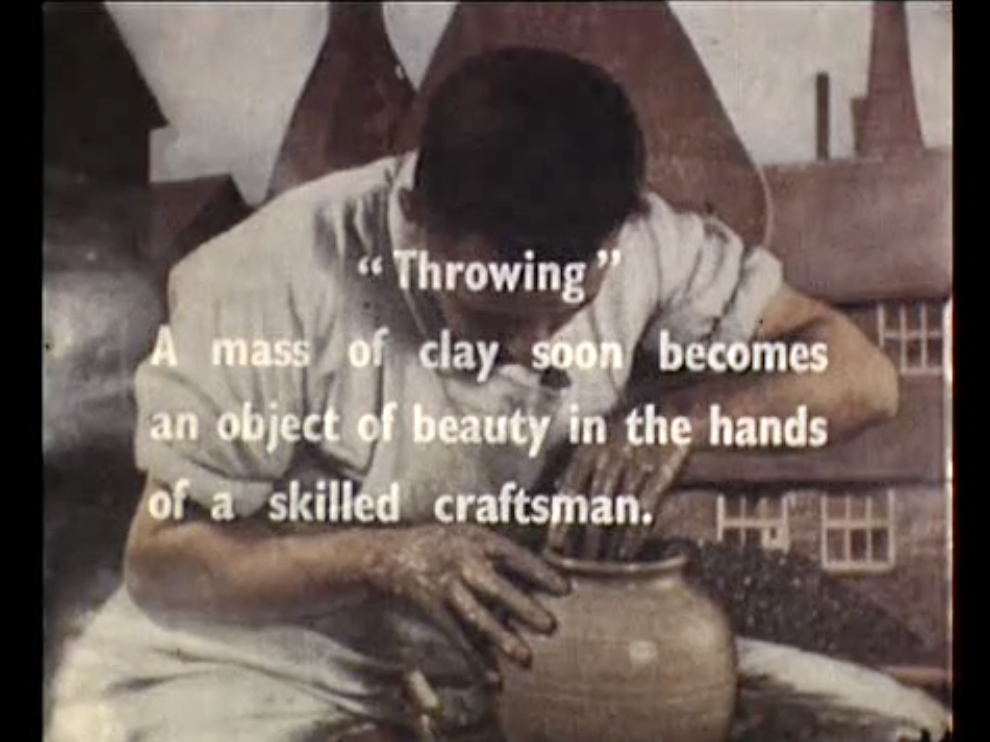

In this post, I’m going to explain why I am using visuals of the ceramic throwing process and of hands crafting. In the picture above, throwing is described as “A mass of clay soon becomes an object of beauty in the hands of a skilled craftsman”. This description resonates with my intention of turning the subtitle into something of beauty, something that should always be included from an accessibility point of view. On a side note: the word “craftsman” should be changed to “craftsperson” or “maker” (unfortunately, old film archives can reveal archaic gender stereotypes).

Craft videos are fascinating because they frequently show pairs of hands turning a shapeless form into something beautiful. For me, this process of formation parallels the idea of digitally shaping words into the language of subtitles and exploring its poetic nature. I’d like to thank Will McTaggart from the North West Film Archive for recommending the beautiful craft films by Sam Hanna. This website has a compilation of Hanna’s craft films; the link is here.

As we reach the end of the June residency, I would like to focus on making a visual connection between videos of hands throwing and crafting, intertitles, and open captions using rewritten subtitle language.

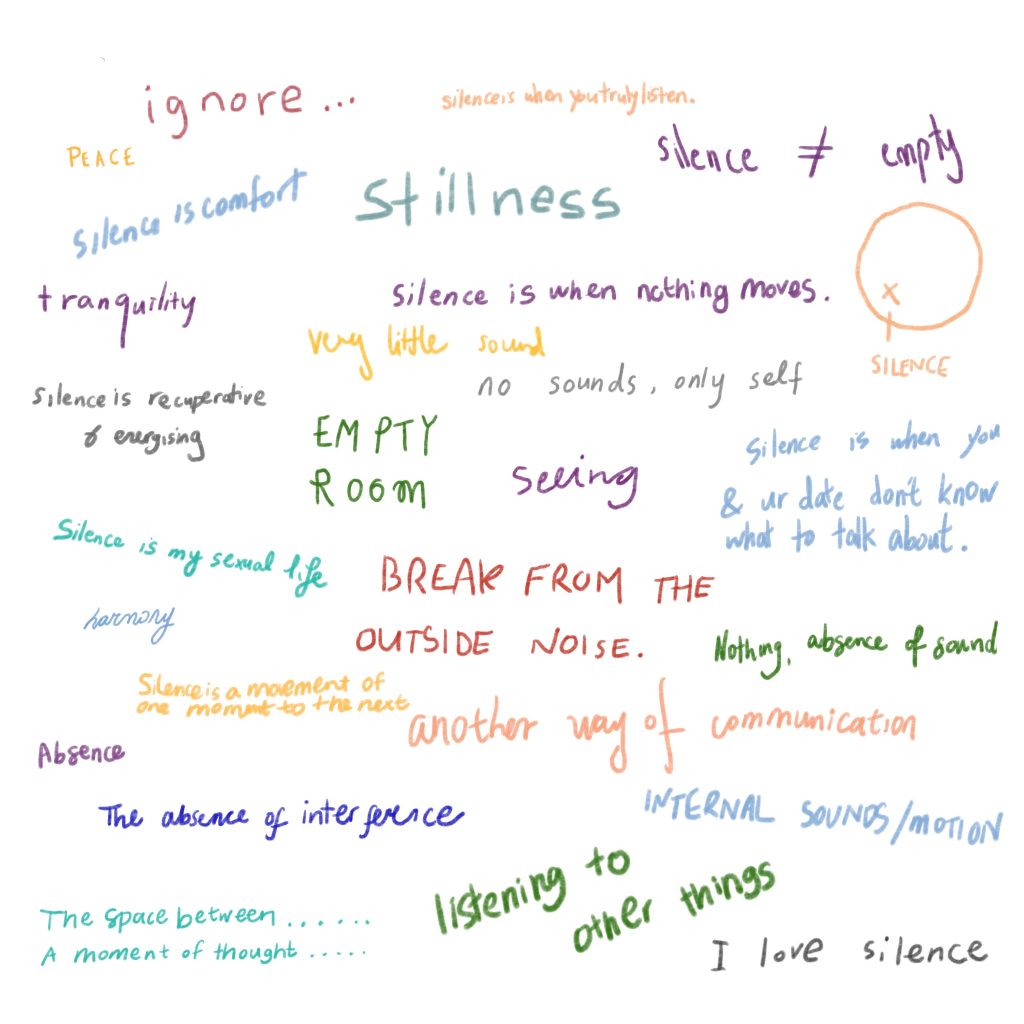

The page from [reading] [sounds] that I posted last week raised a question that has been on my mind: what word could be used to represent silence in a subtitle?

I wanted to share the responses I collected from people at my work-in-progress show during my MA year in 2017. I had laid out a sketchbook in front of the audience with the question “WHAT IS SILENCE?”

The answers I received were all different and made me realise that silence can be represented in poetic and abstract ways.

Below is the digital collation of selected answers from the sketchbook.

![Page 183 of the book [reading] [sounds] by Sean Zdenek - title of chapter "Captioned Silences and Ambient Sounds" Below the title, the paragraph starts with "As counterintuitive as it may sound, silence sometimes needs to be closed captioned. Captioners not only inscribe sounds in writing but must also account for our assumptions about the nature, production, and reception of sounds. One of our most basic assumptions is that sounds are either discrete (with a clear beginning and end) or sustained (continuous). Sustained sounds, including sounds that are captioned as continuous or repeating (e.g., using the present participle verb+ing, as in [phone ringing]) may need to be identified in the captions as stopped or terminated if it's not clear from the visual context. That is, if we can't see the phone being answered or the ring being turned off, the captioner may need to mark the termination of the ringing sound. We also assume as moviegoers that the world is never technically silent. Ambient noise provides context. True silence is rare on the screen. In the real world beyond the screen, the same assumption holds. Sound waves envelop hearing viewers even in "silence." The total absence of sound can only be achieved on Earth artificially in an anechoic chamber, a room designed to block out exterior noise and absorb interior sound waves. Designed to test product noise levels (and not human tolerance levels), the chamber reportedly causes hallucinations and severe disorientation in hearing visitors who spend even a little time in one (Davies 2012):"](https://vitalcapacities.com/wp-content/uploads/2021/06/IMG_2319-768x1024.jpg)

This chapter of the book starts with the sentence “As counterintuitive as it may sound, silence sometimes needs to be closed captioned.” I couldn’t agree more with this line, because it is so easy for captioners to overlook the importance of including silence and ambient sound, and the difference this makes to viewers’ understanding of the story. There have been several occasions when I could hear something happening on TV (ambient sound in films that adds to the atmosphere of a scene) but no words appeared in the subtitles to satisfy my curiosity.

Can silence be captioned? How can one interpret silence into words?

“A large proportion of the U.K. television audience relies on subtitles. The BBC’s audience research team has run two audience surveys for us over the past two years. Each used a representative sample of around 5000 participants, who were questioned on that day’s viewing. The responses indicate that about 10% of the audience use subtitles on any one day and around 6% use them for most of their viewing. This equates to an audience of around 4.5 million people in the U.K., of which over 2.5 million use them most or all of the time. Importantly, not all subtitle users have hearing difficulties, some are watching with the sound turned off and others use them to support their comprehension of the program, while around a quarter of people with hearing difficulties watch television without subtitles.” (from the 2017 article “Understanding the diverse needs of subtitle users in a rapidly evolving media landscape”)

It is important to note that not everyone who uses subtitles identifies as d/Deaf or hard of hearing. In my recent research, I found an article written in 2006 discussing the use of subtitles by 6 million people with so-called “perfect” hearing. In the comments section, some readers shared why they enjoy using subtitles; their reasons included providing a distraction for kids, learning English, and being able to multitask. The link to the article is here.

Can subtitles capture emotions on screen? How does reading subtitles enhance the experience of watching a film? When you read subtitles, what tone of voice do you read them in?

I wanted to share my previous experimental project, Partial Gestures. It is a short 16-second video, cropped from a YouTube video so that it shows only the subtitles, which appear two lines at a time:

“Implanted her at the age of four and a half that she would be able to develop near natural speaking skills. Have you met other deaf people who are 20 to 35 years old and who got an implant as an adult out of our 300 patients we”

Hand movements are partially cropped and hidden behind the subtitles to stop the audience from understanding the context. Behind the subtitles, a conversation is taking place between two people, one of whom is using American Sign Language to ask a question. This video originally comes from the documentary ‘Sound & Fury’, directed by Josh Aronson in 2000. You can find more information on its wiki page here. The original video can be found on YouTube here.

This documentary follows two sets of parents as they discuss their children’s futures: how ‘beneficial’ cochlear implant surgery might be, or how it could separate a child from their Deaf family.

I found the film relevant because the cochlear implant can be controversially seen as something that ‘erases’ Deaf identity, as it usually encourages one to speak and hear instead of using sign language. Around the time I first watched this documentary, I also came across the book Made to Hear: Cochlear Implants and Raising Deaf Children by Dr. Laura Mauldin. The book is about the consequences of cochlear implant surgery for parents and medical professionals, and I found it quite informative. You can find more information about the book here. I must have been about 10 or 11 when I had the surgery, and I do not remember anything in particular about what sound was like before I switched to digital hearing. As my hearing started to deteriorate from the age of 4, I don’t have much recollection of listening to music with natural hearing. It was intriguing to learn how a child trains the brain to reconfigure the way of listening with a new cochlear implant device.

The Co-Designing the Sound Art with Cochlear Implant Users workshop I attended at the V&A in 2016 helped me understand more about CI and its users’ experiences. Led by artist Dr. Tom Tlalim, the workshop included a series of interviews discussing the varied experiences of music among cochlear implant users. This website showcases multiple interviews and information about the project, and can be found here.

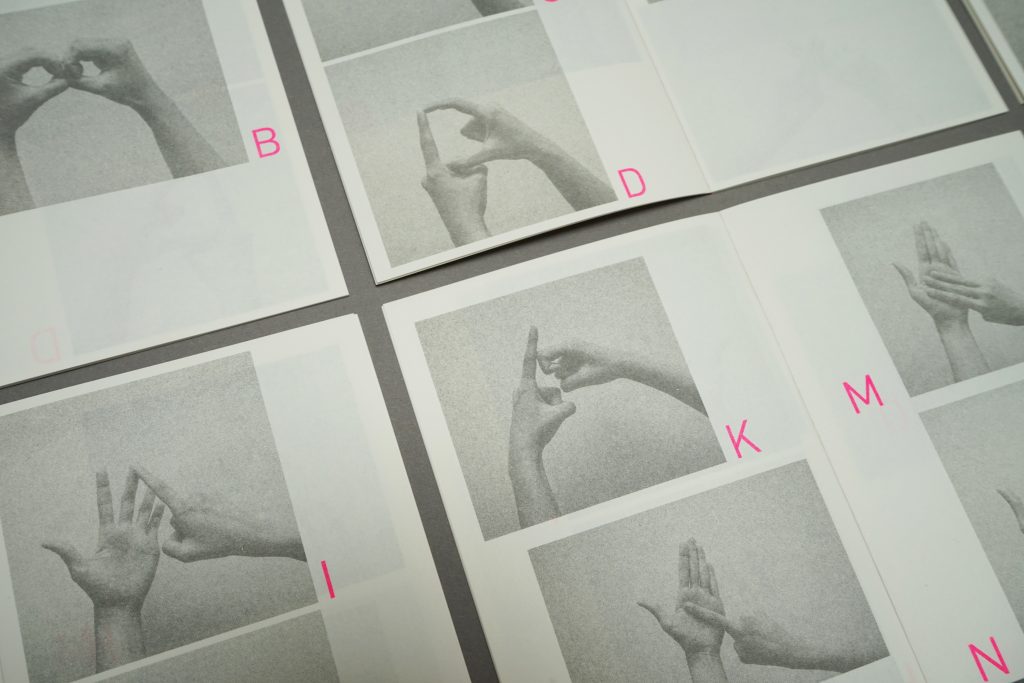

As I learned more about CI, I naturally became interested in British Sign Language. I made this mini booklet to document my journey into learning the British Sign Language alphabet through fingerspelling; it was printed by the Berlin-based Risograph printing studio WeMakeIt.

This booklet was selected to be part of the Artist Self-Publishers fair 2020. As part of showcasing the book, I made Partial Gestures as a video experiment to accompany the project.

I am looking forward to seeing how I can expand on this process of study and keep thinking about the language of subtitles in an engaging way through research!

Remember when Google Glass came out years ago and didn’t turn out to be very popular? At the time, I wasn’t aware that the product had been designed to include a live captioning function; while researching this, I found this video of the product being used in a quiet setting.

There are many great resources you can use to transcribe speech to text through apps and browsers. They work either by turning on a live transcription function or by uploading prerecorded audio files. A few I have used in the past are Webcaptioner, the Google Transcribe App, and Descript. (Each name is hyperlinked to its own website.)

When watching films, I have often paid attention to subtitles that show sound effects such as:

![Cropped film still showing only the subtitle that says [Vehicle pulling up]. Behind the subtitle is a cropped image of a wooden table with stacks of paper on it.](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-08-at-09.26.28-1024x209.png)

![Cropped film still showing only the subtitle that says [clock ticking]. Behind the text is the blurred shape of someone’s shoulder in a suit.](https://vitalcapacities.com/wp-content/uploads/2021/06/Screenshot-2021-06-08-at-09.26.55-1024x208.png)

BBC Academy has online guidelines for subtitles shown across its platforms. I found this line in the Music and songs section interesting: “All music that is part of the action, or significant to the plot, must be indicated in some way.”

Descriptions of music can prepare us for what is about to happen in a film: examples include subtitles such as EERIE INSTRUMENTAL MUSIC PLAYS, TENSE MUSIC PLAYS and SUSPENSEFUL MUSIC PLAYS. They can also tell us what a character is feeling, as with HEROIC MUSIC, DRAMATIC MUSIC SWELLS and SENTIMENTAL MUSIC.

I recently watched the well-known 1982 film Blade Runner and noticed that while dramatic music was playing, it was shown as (***). How does (***) convey the atmosphere of the music being played? Subtitles can be clever in showing context, but their importance is easily neglected.

Regulations for closed captioning started to be introduced in the UK in the 1990s to make video content accessible to d/Deaf and hard-of-hearing people. In the USA, the Americans with Disabilities Act (ADA) was passed in 1990, after which the majority of network providers of programs had to contribute part of their income to captioning to ensure access to verbal information on television and in films. In 1993, when the Television Decoder Circuitry Act of 1990 went into effect, TV receivers with picture screens 13 inches or larger that were imported into the US had to have built-in decoder circuitry to display closed captions. Decoders had sold for $250 at Sears in the 1980s, which meant they were not very accessible to some people. Closed captioning started out as an experiment intended only for people who were deaf, yet it became something of an everyday commodity that has helped millions of people connect. A brief history of CC can be found here.

When I was younger, I remember renting VHS tapes from Blockbuster with my family. Specifically, I remember trying to pick ones with the CC logo on them so that I could watch them without worrying. Fast-forward to 2021: unfortunately, not 100% of UK TV shows, films and online video services have subtitles. The UK charity the Royal National Institute for Deaf People (previously known as Action on Hearing Loss) currently runs a campaign called Subtitle It!, which has been tackling the issue since 2015.

There is still a long way to go to make existing and future web content fully accessible to everyone, but I am glad there are resources we can learn from regarding accessibility.

Glass / Glas by Bert Haanstra (1958) is a short documentary that playfully shows the process of glassmaking. This is a compilation of stills featuring hands from the film, gathered for visual inspiration. I have always been interested in the movement of hands, as it can convey a surprising amount of information about one’s culture, emotions, and the context of the conversation taking place.

Thanks to Laura Lulika for the reference!

When I was researching subtitles, I was surprised to learn the difference between the terms closed captions and subtitles.

Closed captions are for viewers who cannot hear the audio, and they include sound effects.

Subtitles are for viewers who can hear the audio but cannot understand the language, and they do not include sound effects.

Most countries outside the USA and Canada tend to merge the two terms into one in film and media, which explains why I found it confusing!

I also found that open captions are permanently embedded in the video itself, so they cannot be turned off by the viewer; but that also means their style, size and colour can be determined by the creator ahead of time.

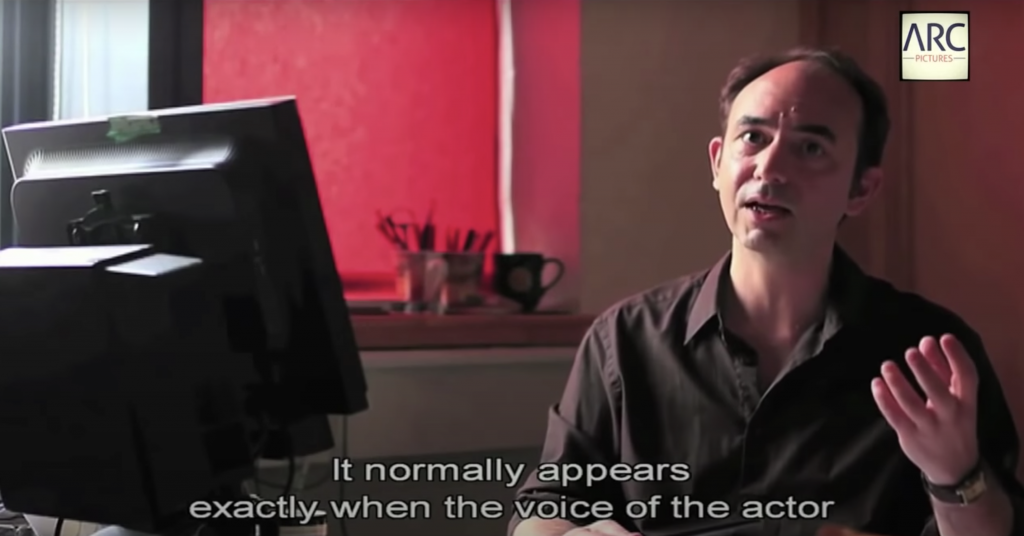

Continuing my research, I watched a short documentary called The Invisible Subtitler, made in 2013. The documentary discusses the importance of the subtitler’s role in translating films into different languages. It is available to watch on YouTube.

The act of translation always fascinates me, as one has to take everything into context to make the best translation. When I was first learning English, I had to memorise the definitions of new English words in Korean. As I learned more English, I realised that an English word does not always have a single Korean equivalent.

Like language, sound can be translated in many different ways. In subtitles, a sound can be summed up in a single word or in a detailed description; it depends on the choice of the subtitler or scriptwriter. Sometimes the subtitle simply shows (MUSIC); at other times it gives details such as the title of the music and its lyrics.

Yesterday I enjoyed spending the day exploring archival film footage in London’s Screen Archives. One thing I have noticed during my research so far is the abundance of content; it’s great to see that so much is readily available. Over the next few days, I will continue exploring the collection to find inspiration for my visual research.

If you have any suggestions or references on subtitles that I could research, please let me know!

A friend recently told me about this program, suggesting it might be relevant to my practice. It sounded super interesting, but I was worried that it might just be a radio recording without subtitles. Then I was happy to find a subtitled video for people like me: d/Deaf and hard of hearing people. My relationship with radio has been pretty much non-existent; the only time I would hear it was when there wasn’t any interesting music to play in the car, and my mom would turn it on for background sound.

In this 28-minute video, the Deaf poet Raymond Antrobus talks about his experience with radio, subtitles on TV, and translating sound within the hearing world. The words on screen constantly flicker, as though we were watching an old static-filled TV. At the same time, the words are spoken in a flat monotone, and the interviews with artists and writers are heard in their interpreters’ voices.

Throughout the video, Deaf artist Christine Sun Kim, Deaf poet Meg Day, filmmaker Lindsey Dryden, and caption maker Calum Davidson discuss what closed captions mean to them. I found this video really great, as it resonated with my experience and brought back memories of constantly trying to find something on TV that provided subtitles. In particular, Lindsey Dryden referred to the film Dawn of the Deaf by Rob Savage. The film makes clever use of subtitles by only partially showing them during a scene of a couple arguing in BSL, intentionally withholding the full context of the argument from the audience. The short film can be found on Rob Savage’s website (the video is subtitled).

BBC Radio 4’s “Invention in Sound” can be found on the BBC website, and the transcript is also available to download.

- Subtitles can easily change the context of the video being shown.

- Subtitles can tell us either so much or so little.

- The size and placement of subtitles can be important, as they can either hide or reveal what is happening on screen.

- How can subtitles translate sound?

In my research posts, I would like to include questions, bullet-pointed lines of thinking, comments, and references. Please let me know if you have any questions!

This week I am hoping to explore the unique language of subtitles further and begin searching through film archives for more references. I want to ask everyone: what does sound mean to you? What is your experience with subtitles?