Over the past couple of years I’ve been thinking a lot about how computers see the world – through machine vision technologies and various data analysis systems – and how this shapes our lives.

Facial recognition technologies used by police have been found to falsely identify and criminalise people (rates of mistaken identity were as high as 93%, and 81% in another study). CV-sorting and hiring algorithms are given the power to choose job candidates: Amazon’s tool became biased against female candidates, and HireVue’s tool claimed to make predictions based on a candidate’s tone of voice and facial movements. But my favourite example is one that decided the ideal candidate would be called Jared and would play lacrosse…

There are algorithms that will automatically move you further down a medical waiting list, others that decide whether you have access to housing or a loan, and countless more besides.

The decisions that computers make are hugely consequential; they can’t be assumed to be accurate or infallible.

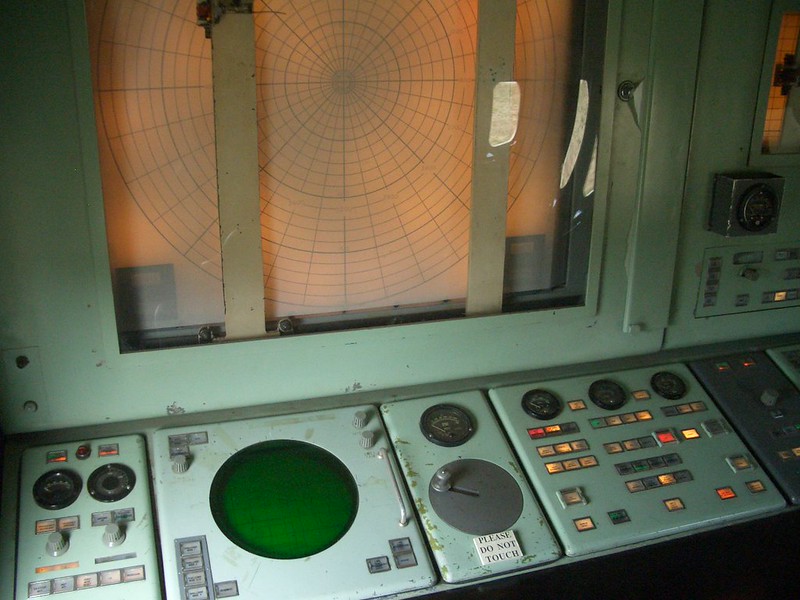

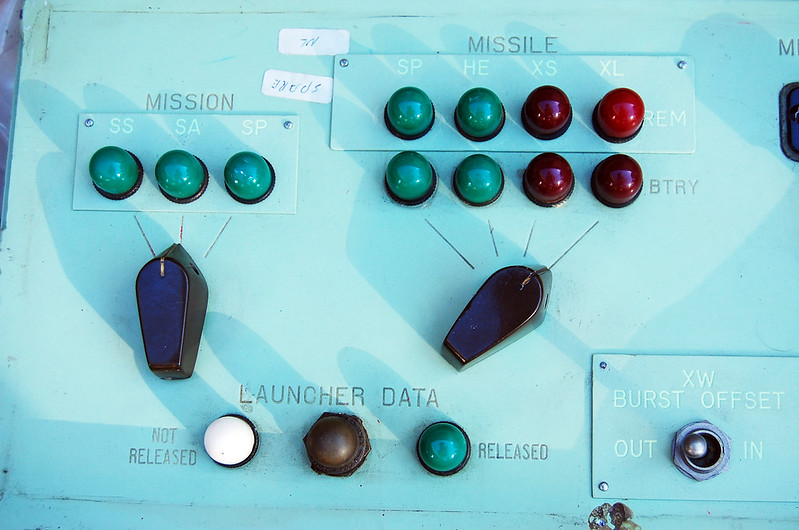

That’s why I was fascinated when I came across the 1983 story of Stanislav Petrov and how his questioning of what the computer claimed to see averted a nuclear missile strike capable of killing 50% of the US population. In this case, it was sunlight reflecting off high-altitude clouds that looked to the computer like an incoming missile attack. The system reported the attack with high confidence, leaving no room for uncertainty.

The sun and clouds at the autumn equinox became an act of war in the eyes of the machine.

[Image attribution: Bass Photo Co Collection, Indiana Historical Society]