Artificial intelligence (AI) models increasingly shape the contemporary world, and one family of them is known as ‘world models’. Although the idea attracted significant attention in 2025, its origins go back much further. In 1990, Jürgen Schmidhuber introduced a foundational architecture for planning and reinforcement learning (RL) built from interacting recurrent neural networks (RNNs): a controller and a world model. In this framework, the world model serves as an internal, differentiable simulation of the environment and learns to predict the outcomes of the controller’s actions. By simulating these action-consequence chains internally, the agent can plan and optimise its decisions. This approach is now commonly used for video prediction and for simulation within game engines, yet it remains closely tied to cameras and image processing.

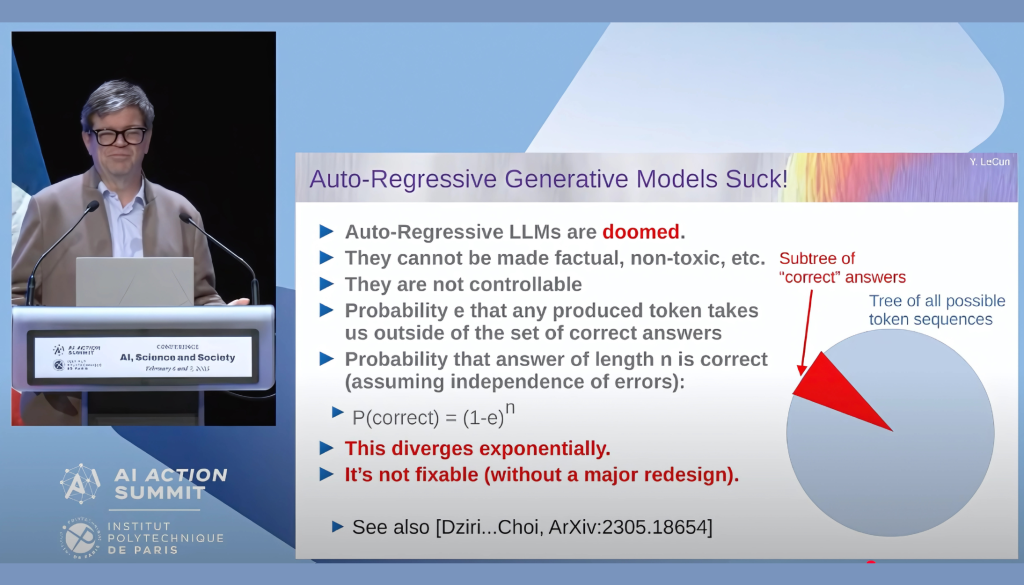
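
To make the controller and world-model loop concrete, here is a minimal sketch in plain Python. It is not Schmidhuber's RNN architecture: the one-dimensional environment `true_env`, the linear world model, and the brute-force `plan` function are all hypothetical stand-ins, chosen only to show an agent learning a predictive model and then planning by imagining action-consequence chains inside it.

```python
import itertools
import random

ACTIONS = (-1.0, 0.0, 1.0)  # hypothetical action set: step left, stay, step right


def true_env(x, a):
    """Toy environment (an assumption): the action shifts a 1-D position."""
    return x + a


def train_world_model(steps=2000, lr=0.01, seed=0):
    """Fit x_next ~ w_x * x + w_a * a by SGD on random interactions."""
    rng = random.Random(seed)
    w_x, w_a = 0.0, 0.0
    for _ in range(steps):
        x = rng.uniform(-5.0, 5.0)
        a = rng.choice(ACTIONS)
        err = (w_x * x + w_a * a) - true_env(x, a)  # prediction error
        w_x -= lr * err * x
        w_a -= lr * err * a
    return w_x, w_a


def plan(model, x, goal, horizon=3):
    """Controller: imagine every action sequence inside the model and
    return the first action of the sequence that stays closest to the goal."""
    w_x, w_a = model
    best_cost, best_first = float("inf"), 0.0
    for seq in itertools.product(ACTIONS, repeat=horizon):
        sim, cost = x, 0.0
        for a in seq:
            sim = w_x * sim + w_a * a   # imagined step, no real interaction
            cost += abs(sim - goal)     # accumulate distance along the rollout
        if cost < best_cost:
            best_cost, best_first = cost, seq[0]
    return best_first


model = train_world_model()
x = 0.0
for _ in range(6):
    # Act in the real environment, but choose each action by planning in the model.
    x = true_env(x, plan(model, x, goal=4.0))
print("final position:", x)
```

In this toy setup the learned weights should end up close to the true dynamics, so the agent should walk to the goal position and stay there. The point of the sketch is the separation of roles: the model is trained on real experience, while the controller only ever evaluates its options inside the model.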

Despite these advances, a fundamental limitation first identified in 1990 continues to hold back progress: instability and catastrophic forgetting. As the controller guides the agent into new areas of experience, the network risks overwriting previously learned knowledge. The result is fragile, non-lifelong learning, in which new information erases older representations.

Furthermore, in his presentation ‘The Shape of AI to Come’ at the AI Action Summit’s Science Days at the Institut Polytechnique de Paris (IP Paris), Prof. Yann LeCun argued that the volume of data a large language model is trained on remains small next to what a four-year-old child has already taken in, which he put at 1.1E14 bytes. One of his slide titles has stayed in my mind: “Auto-Regressive Generative Models Suck!”

In reinforcement learning, the AI’s policies often remain static and cannot adapt to the unforeseen complexities of the real world; in other words, the AI does not learn once training has ended. Emerging paradigms such as Liquid Neural Networks (Ramin Hasani) and Dynamic Deep Learning (Richard Sutton) attempt to address this rigidity. However, these approaches still rely heavily on randomly selecting and resetting parts of the network to keep learning dynamic and, potentially, to improve real-time reaction and long-term learning. They also still struggle with the problem of AI hallucinations. AI needs a fundamental paradigm shift, but such a shift takes time, and until it arrives the current paradigm may already be overwhelming for both machines and humans.
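
The catastrophic forgetting described above can be reproduced in a few lines. The sketch below is an illustrative assumption rather than anything from the cited work: a two-weight model with overlapping Gaussian features (`features`, `TASK_A`, and `TASK_B` are hypothetical names and values) is trained on one task and then only on a second one. Both tasks could be solved by a single set of weights, yet sequential training overwrites the first.

```python
import math


def features(x):
    """Two overlapping Gaussian bumps centred at 0 and 1 (a hypothetical choice)."""
    return (math.exp(-(x - 0.0) ** 2), math.exp(-(x - 1.0) ** 2))


def predict(w, x):
    f = features(x)
    return w[0] * f[0] + w[1] * f[1]


def train(w, x, target, steps=500, lr=0.1):
    """Plain gradient descent on squared error for a single (x, target) task."""
    w = list(w)
    f = features(x)
    for _ in range(steps):
        err = predict(w, x) - target
        w[0] -= lr * err * f[0]
        w[1] -= lr * err * f[1]
    return w


TASK_A = (0.0, 1.0)    # at x = 0 the target is +1
TASK_B = (1.0, -1.0)   # at x = 1 the target is -1

w = train([0.0, 0.0], *TASK_A)
error_a_before = abs(predict(w, TASK_A[0]) - TASK_A[1])

w = train(w, *TASK_B)  # keep training, but only on task B
error_a_after = abs(predict(w, TASK_A[0]) - TASK_A[1])
error_b_after = abs(predict(w, TASK_B[0]) - TASK_B[1])

print(f"task A error before learning B: {error_a_before:.3f}")
print(f"task A error after learning B:  {error_a_after:.3f}")
print(f"task B error after learning B:  {error_b_after:.3f}")
```

Because the two features overlap, every gradient step for task B also shifts the prediction at task A's input. The error on task A should therefore climb from near zero to a large value, even though task B is learned well.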